
Least Squares Image Reconstruction

An example showing how to reconstruct images using the least-squares estimate.

This script demonstrates the use of the Conjugate Gradient (CG), LSQR and LSMR methods to reconstruct images from non-uniform k-space data.

```python
import os
import time

from tqdm.auto import tqdm
import cupy as cp
import numpy as np
from brainweb_dl import get_mri
from matplotlib import pyplot as plt
from skimage.metrics import peak_signal_noise_ratio as psnr

import mrinufft
from mrinufft.extras.optim import loss_l2_reg, loss_l2_AHreg

# NUFFT backend, overridable through the MRINUFFT_BACKEND environment variable
BACKEND = os.environ.get("MRINUFFT_BACKEND", "cufinufft")
```

Set up the inputs

```python
# 2D spiral trajectory: 64 shots (Nc) of 512 samples (Ns) each
samples_loc = mrinufft.initialize_2D_spiral(Nc=64, Ns=512, nb_revolutions=8)

# Load a BrainWeb volume and keep a single 2D slice (index 90)
ground_truth = get_mri(sub_id=4)
ground_truth = ground_truth[90]

# Normalize the ground truth image to unit root-mean-square intensity
ground_truth = ground_truth / np.sqrt(np.mean(abs(ground_truth) ** 2))

image_gpu = cp.array(ground_truth)  # convert to cupy array for GPU processing
print("image size: ", ground_truth.shape)
```

image size: (256, 256)

Set up the NUFFT operator

```python
NufftOperator = mrinufft.get_operator(BACKEND)  # get the operator class for the backend
nufft = NufftOperator(
    samples_loc,
    shape=ground_truth.shape,
    squeeze_dims=True,
)  # create the NUFFT operator
```

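The operator's forward (op) and adjoint (adj_op) methods approximate a non-uniform discrete Fourier transform. To make that concrete, the sketch below builds the transform explicitly as a dense matrix for a tiny 8 × 8 image. This is an illustration under our own assumptions, not mrinufft or cufinufft code: the helper nudft_matrix, the exp(-2iπ k·r) sign convention, the zero-centred pixel grid and k-space coordinates in [-0.5, 0.5) are all assumptions, and a real NUFFT evaluates this product far more efficiently. The final dot-product test checks that adj_op is the conjugate transpose of op.

```python
import numpy as np


def nudft_matrix(samples, shape):
    """Dense 2D non-uniform DFT matrix (illustration only, O(M * N) memory).

    samples: (M, 2) k-space locations, assumed in cycles/pixel within [-0.5, 0.5).
    shape:   image shape (Nx, Ny).
    """
    # Zero-centred pixel coordinates, flattened in C order to match image.ravel()
    axes = [np.arange(n) - n // 2 for n in shape]
    grid = np.stack(np.meshgrid(*axes, indexing="ij"), axis=-1).reshape(-1, 2)
    # A[m, n] = exp(-2j * pi * <k_m, r_n>)  (sign convention assumed)
    return np.exp(-2j * np.pi * samples @ grid.T)


rng = np.random.default_rng(0)
shape = (8, 8)
samples = rng.uniform(-0.5, 0.5, size=(100, 2))
A = nudft_matrix(samples, shape)


def op(image):
    """Forward model: image -> non-uniform k-space samples."""
    return A @ image.ravel()


def adj_op(kspace):
    """Adjoint model: k-space samples -> image (not an inverse)."""
    return (A.conj().T @ kspace).reshape(shape)


# Adjoint (dot-product) test: <A x, y> must equal <x, A^H y>
x = rng.standard_normal(shape) + 1j * rng.standard_normal(shape)
y = rng.standard_normal(100) + 1j * rng.standard_normal(100)
lhs = np.vdot(y, op(x))
rhs = np.vdot(adj_op(y), x)
print(np.isclose(lhs, rhs))  # True
```

With M k-space points and N pixels, each dense product costs O(MN) operations, which is why a fast NUFFT backend is used for a 256 × 256 image.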

Simulate the k-space data and compute the adjoint reconstruction

```python
kspace_data_gpu = nufft.op(image_gpu)  # simulate the k-space data (forward NUFFT)
kspace_data = kspace_data_gpu.get()  # copy back to a numpy array for display
adjoint = nufft.adj_op(kspace_data_gpu).get()  # adjoint NUFFT, used as a baseline
```


Pseudo-inverse solver

The least-squares solution to the inverse problem can be obtained by solving the following optimization problem:

\[
\min_x \|Ax - b\|_2^2
\]

where \(A\) is the NUFFT operator, \(x\) is the image to be reconstructed, and \(b\) is the k-space data. The optimization problem can be solved using different iterative solvers, such as Conjugate Gradient (CG), LSQR and LSMR. The solvers are implemented in the pinv_solver() method of the NUFFT operator, which takes as input the k-space data, the maximum number of iterations, and the optimization method to use.
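As an illustration of what an iterative least-squares solver does, here is a minimal conjugate-gradient sketch applied to the normal equations \(A^H A x = A^H b\) of \(\min_x \|Ax - b\|_2^2\), with a small random dense matrix standing in for the NUFFT operator. The function cg_normal and its callback argument are hypothetical names of our own; this is not mrinufft's implementation, which may differ (for example in stopping rules). The matrix has fewer rows than columns, as in this example (assuming one sample per trajectory point, 64 × 512 = 32768 samples for a 256 × 256 = 65536-pixel image), and CG started from zero should then approach the minimum-norm, i.e. pseudo-inverse, solution \(A^+ b\).

```python
import numpy as np


def cg_normal(A, b, max_iter=200, tol=1e-10, callback=None):
    """Conjugate gradient on A^H A x = A^H b, started from x = 0 (sketch)."""
    x = np.zeros(A.shape[1], dtype=complex)
    r = A.conj().T @ b  # normal-equation residual A^H (b - A x) at x = 0
    p = r.copy()
    rs_old = np.vdot(r, r).real
    stop = tol * np.sqrt(rs_old)  # relative stopping threshold
    for _ in range(max_iter):
        Ap = A.conj().T @ (A @ p)  # apply A^H A without forming it
        alpha = rs_old / np.vdot(p, Ap).real
        x = x + alpha * p
        r = r - alpha * Ap
        rs_new = np.vdot(r, r).real
        if callback is not None:
            callback(x)  # called once per iteration with the current estimate
        if np.sqrt(rs_new) < stop:
            break
        p = r + (rs_new / rs_old) * p
        rs_old = rs_new
    return x


rng = np.random.default_rng(0)
A = rng.standard_normal((20, 40)) + 1j * rng.standard_normal((20, 40))  # underdetermined
b = rng.standard_normal(20) + 1j * rng.standard_normal(20)

residuals = []  # filled by the callback with ||Ax - b||, one entry per iteration
x_cg = cg_normal(A, b, callback=lambda x: residuals.append(np.linalg.norm(A @ x - b)))
x_pinv = np.linalg.pinv(A) @ b
print(len(residuals), np.allclose(x_cg, x_pinv, atol=1e-6))
```

The callback argument mirrors, in spirit, the callback passed to pinv_solver: it receives the current estimate and can record any metric.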

Callback monitoring

We can monitor the convergence of the optimization with a callback function that is called at each iteration. The callback can compute different metrics, such as the residual norm, the PSNR, or the elapsed time.
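The PSNR tracked by the callback comes from skimage.metrics.peak_signal_noise_ratio, which computes \(10 \log_{10}(\mathrm{data\_range}^2 / \mathrm{MSE})\). The sketch below reimplements that formula in NumPy for illustration; psnr_sketch is a hypothetical helper, not part of scikit-image. Because the MSE is symmetric and data_range is passed explicitly, swapping the two images does not change the value.

```python
import numpy as np


def psnr_sketch(reference, estimate, data_range):
    """Peak signal-to-noise ratio in dB: 10 * log10(data_range**2 / MSE)."""
    reference = np.asarray(reference, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    mse = np.mean((reference - estimate) ** 2)
    return 10 * np.log10(data_range**2 / mse)


ref = np.array([[0.0, 1.0], [2.0, 3.0]])
est = ref + 0.1  # uniform error of 0.1, so MSE = 0.01
print(f"{psnr_sketch(ref, est, data_range=ref.max()):.2f} dB")  # 29.54 dB
```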

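```python
def mixed_cb(*args, **kwargs):
    """A compound callback function, to track iterations time and convergence."""
    return [
        time.perf_counter(),  # timestamp before computing the metrics
        loss_l2_reg(*args, **kwargs),  # ||Ax - b||
        loss_l2_AHreg(*args, **kwargs),  # ||A^H(Ax - b)||
        psnr(
            abs(args[0].get().squeeze()),  # current estimate, copied from the GPU
            abs(ground_truth.squeeze()),
            data_range=ground_truth.max(),
        ),
        time.perf_counter(),  # timestamp after computing the metrics
    ]


def process_cb_results(cb_results):
    t0, r, rH, psnrs, t1 = list(zip(*cb_results))
    # Measure each iteration from the end of the previous callback, so that the
    # time spent computing the metrics is excluded from the solver timing.
    t1 = (t0[0], *t1[:-1])
    time_it = np.cumsum(np.array(t0) - np.array(t1))
    r = [rr.get() for rr in r]  # residual norms back to the host
    rH = [rr.get() for rr in rH]
    return {"time": time_it, "res": r, "AHres": rH, "psnr": psnrs}
```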
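The timing logic in process_cb_results can be checked by hand. With the hypothetical timestamps below, each callback takes 0.5 s; shifting the end times by one iteration and accumulating the differences keeps only the time spent inside the solver, so the metric computations do not inflate the reported speed. Note that the first entry is 0 by construction.

```python
import numpy as np

# Hypothetical timestamps (s) for three iterations, as mixed_cb would record them:
# t_start is taken before computing the metrics, t_end right after.
t_start = np.array([1.0, 3.0, 6.0])
t_end = np.array([1.5, 3.5, 6.5])

# Same shift as process_cb_results: measure each iteration from the end of the
# previous callback; the first iteration is measured from its own start (0 s).
prev_end = np.concatenate(([t_start[0]], t_end[:-1]))
solver_time = np.cumsum(t_start - prev_end)
print(solver_time)  # cumulative solver time: 0.0, 1.5 and 4.0 s

# Wall-clock span = solver time + callback overhead (3 callbacks x 0.5 s)
print(t_end[-1] - t_start[0], solver_time[-1] + 3 * 0.5)  # both 5.5
```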

Run the least-squares minimization for all the solvers

```python
OPTIM = ["cg", "lsqr", "lsmr"]
METRICS = {
    "res": r"$\|Ax-b\|$",
    "AHres": r"$\|A^H(Ax-b)\|$",
    "psnr": "PSNR",
}
MAX_ITER = 1000

images = dict()
iterations_cb = dict()
pg = tqdm(total=MAX_ITER, position=0, leave=True)  # progress bar shared by all solvers

for optim in OPTIM:
    image, iter_cb = nufft.pinv_solver(
        kspace_data=kspace_data_gpu,
        max_iter=MAX_ITER,
        callback=mixed_cb,
        optim=optim,
        progressbar=pg,
    )
    images[optim] = image.get().squeeze()  # retrieve image from GPU.
    iterations_cb[optim] = process_cb_results(iter_cb)
```


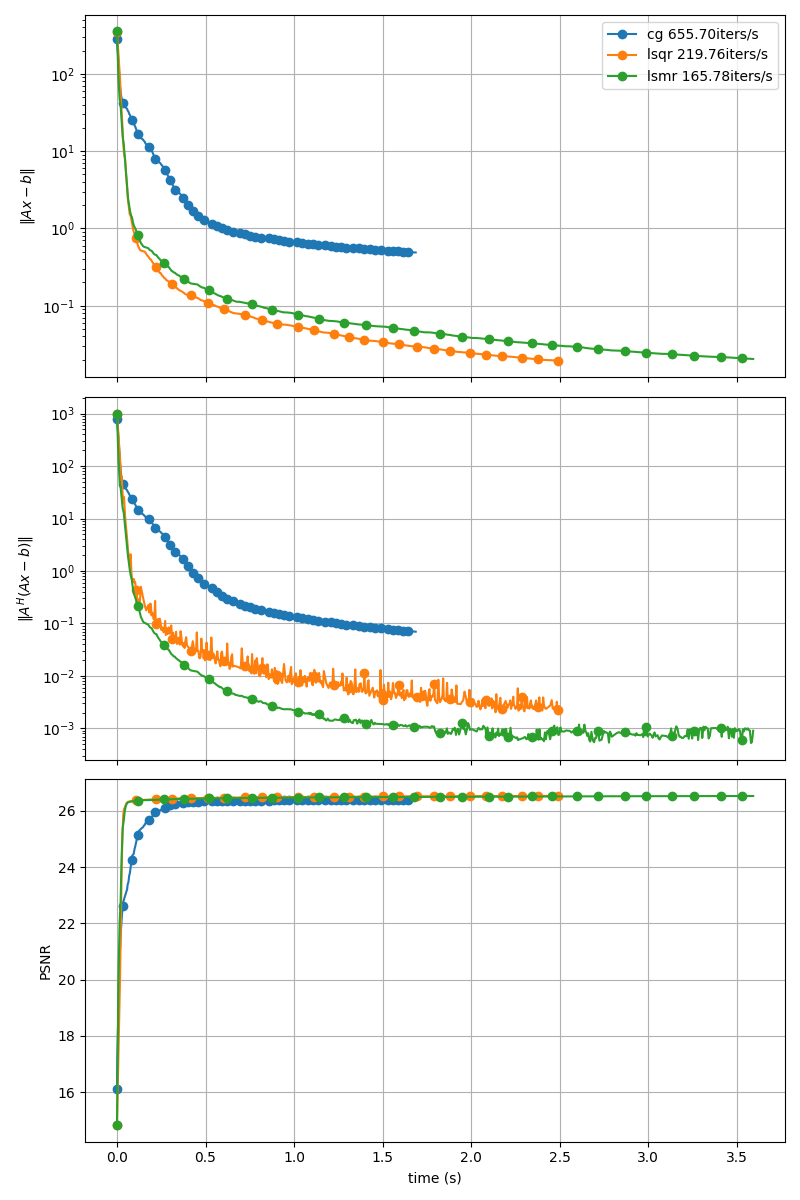

Display Convergence

```python
fig, axs = plt.subplots(len(METRICS), 1, sharex=True, figsize=(8, 12))
for i, metric in enumerate(METRICS):
    for optim in OPTIM:
        if "res" in metric:
            axs[i].set_yscale("log")
        axs[i].plot(
            iterations_cb[optim]["time"],
            iterations_cb[optim][metric],
            marker="o",
            markevery=20,
            label=f"{optim} {np.mean(1/np.diff(iterations_cb[optim]['time'])):.2f}iters/s",
        )
    axs[i].grid()
    axs[i].set_ylabel(METRICS[metric])
axs[0].legend()
axs[-1].set_xlabel("time (s)")
fig.tight_layout()
plt.show()
```
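The two residual curves measure different things. Writing \(f(x) = \tfrac{1}{2}\|Ax - b\|_2^2\), the vector \(A^H(Ax - b)\) is the gradient of \(f\) (its real and imaginary parts are the derivatives with respect to the real and imaginary parts of \(x\)), so \(\|A^H(Ax - b)\|\) vanishes at any minimizer of the least-squares problem, even when \(\|Ax - b\|\) does not (for instance with noisy data outside the range of \(A\)). The sketch below checks this gradient formula with central finite differences on a small random complex matrix; the sizes and seed are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(2)
A = rng.standard_normal((15, 8)) + 1j * rng.standard_normal((15, 8))
b = rng.standard_normal(15) + 1j * rng.standard_normal(15)
x = rng.standard_normal(8) + 1j * rng.standard_normal(8)


def f(x):
    """Least-squares objective 0.5 * ||Ax - b||^2."""
    return 0.5 * np.linalg.norm(A @ x - b) ** 2


grad = A.conj().T @ (A @ x - b)  # claimed gradient A^H (Ax - b)

# Central finite differences along the real and imaginary part of each entry
eps = 1e-6
fd = np.zeros(8, dtype=complex)
for j in range(8):
    e = np.zeros(8)
    e[j] = eps
    d_re = (f(x + e) - f(x - e)) / (2 * eps)
    d_im = (f(x + 1j * e) - f(x - 1j * e)) / (2 * eps)
    fd[j] = d_re + 1j * d_im

print(np.allclose(fd, grad, rtol=1e-5, atol=1e-5))  # True
```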

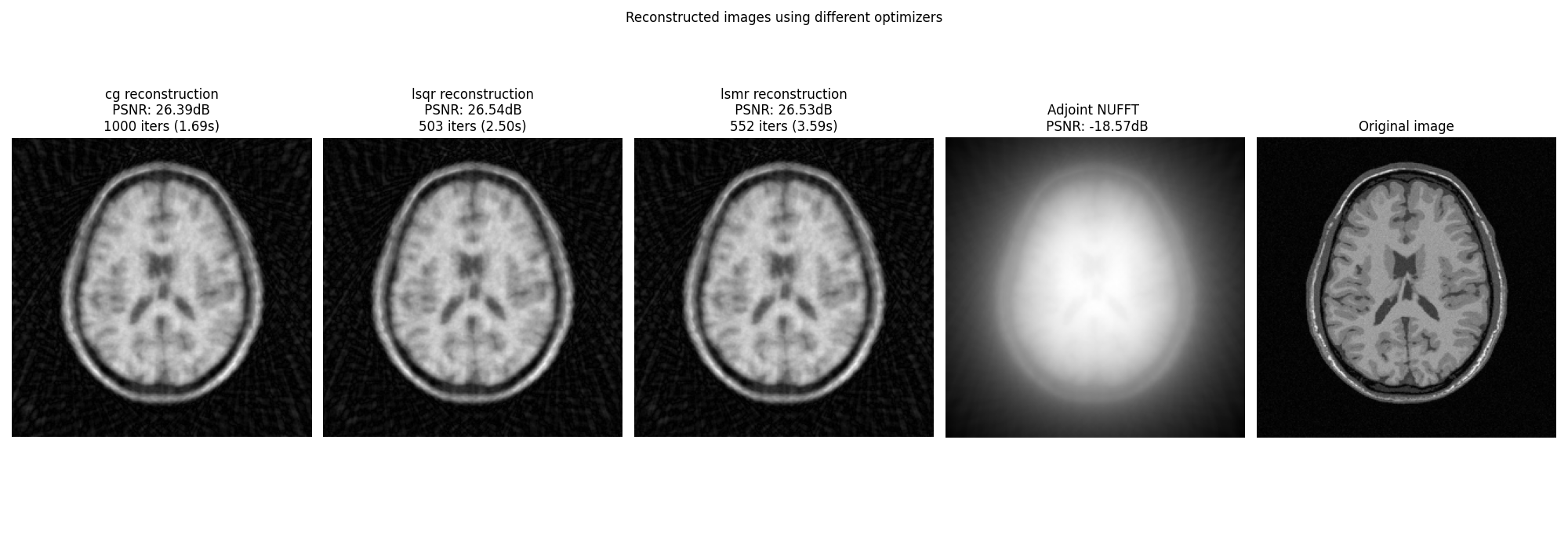

Display images

```python
fig, axs = plt.subplots(1, len(OPTIM) + 2, figsize=(20, 7))
for i, optim in enumerate(OPTIM):
    axs[i].imshow(abs(images[optim]), cmap="gray", origin="lower")
    axs[i].axis("off")
    axs[i].set_title(
        f"{optim} reconstruction\n PSNR: {iterations_cb[optim]['psnr'][-1]:.2f}dB \n"
        f"{len(iterations_cb[optim]['time'])} iters ({iterations_cb[optim]['time'][-1]:.2f}s)"
    )
axs[-1].imshow(abs(ground_truth), cmap="gray", origin="lower")
axs[-1].axis("off")
axs[-1].set_title("Original image")
axs[-2].imshow(
    abs(adjoint),
    cmap="gray",
    origin="lower",
)
axs[-2].axis("off")
axs[-2].set_title(
    f"Adjoint NUFFT \n PSNR: {psnr(abs(adjoint), abs(ground_truth), data_range=ground_truth.max()):.2f}dB"
)
fig.suptitle("Reconstructed images using different optimizers")
fig.tight_layout()
plt.show()
```

Using a damping regularization term

The least-squares problem can be regularized with a damping term to improve its conditioning. This is done by solving the following optimization problem:

\[
\min_x \|Ax - b\|_2^2 + \gamma \|x\|_2^2
\]

where \(\gamma\) is the regularization parameter.
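Setting the gradient of \(\|Ax - b\|_2^2 + \gamma \|x\|_2^2\) to zero gives the regularized normal equations \((A^H A + \gamma I)x = A^H b\), which are equivalent to an ordinary least-squares problem on the stacked system \([A;\ \sqrt{\gamma} I]\,x \approx [b;\ 0]\). The sketch below verifies this equivalence on a small random matrix and shows that damping shrinks the solution compared with the undamped minimum-norm solution. How mrinufft maps its damp argument to \(\gamma\) is an assumption we do not settle here: for reference, SciPy's lsqr and lsmr define damp so that the objective is \(\|Ax - b\|_2^2 + \mathrm{damp}^2 \|x\|_2^2\), i.e. \(\gamma = \mathrm{damp}^2\), and mrinufft may or may not follow the same convention.

```python
import numpy as np

rng = np.random.default_rng(1)
A = rng.standard_normal((20, 40)) + 1j * rng.standard_normal((20, 40))
b = rng.standard_normal(20) + 1j * rng.standard_normal(20)
gamma = 0.1  # regularization weight (value chosen for illustration)
n = A.shape[1]

# 1) Regularized normal equations: (A^H A + gamma I) x = A^H b
AH = A.conj().T
x_normal = np.linalg.solve(AH @ A + gamma * np.eye(n), AH @ b)

# 2) Same problem as plain least squares on a stacked system:
#    min || [A; sqrt(gamma) I] x - [b; 0] ||^2
A_aug = np.vstack([A, np.sqrt(gamma) * np.eye(n)])
b_aug = np.concatenate([b, np.zeros(n)])
x_aug = np.linalg.lstsq(A_aug, b_aug, rcond=None)[0]

print(np.allclose(x_normal, x_aug))  # True
# The damped solution has a smaller norm than the undamped pseudo-inverse solution
print(np.linalg.norm(x_normal) < np.linalg.norm(np.linalg.pinv(A) @ b))  # True
```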

```python
images = dict()
iterations_cb = dict()
pg = tqdm(total=MAX_ITER, position=0, leave=True)

for optim in OPTIM:
    image, iter_cb = nufft.pinv_solver(
        kspace_data=kspace_data_gpu,
        max_iter=MAX_ITER,
        callback=mixed_cb,
        damp=0.1,  # damping (regularization) weight
        optim=optim,
        progressbar=pg,
    )
    images[optim] = image.get().squeeze()  # retrieve image from GPU.
    iterations_cb[optim] = process_cb_results(iter_cb)
```


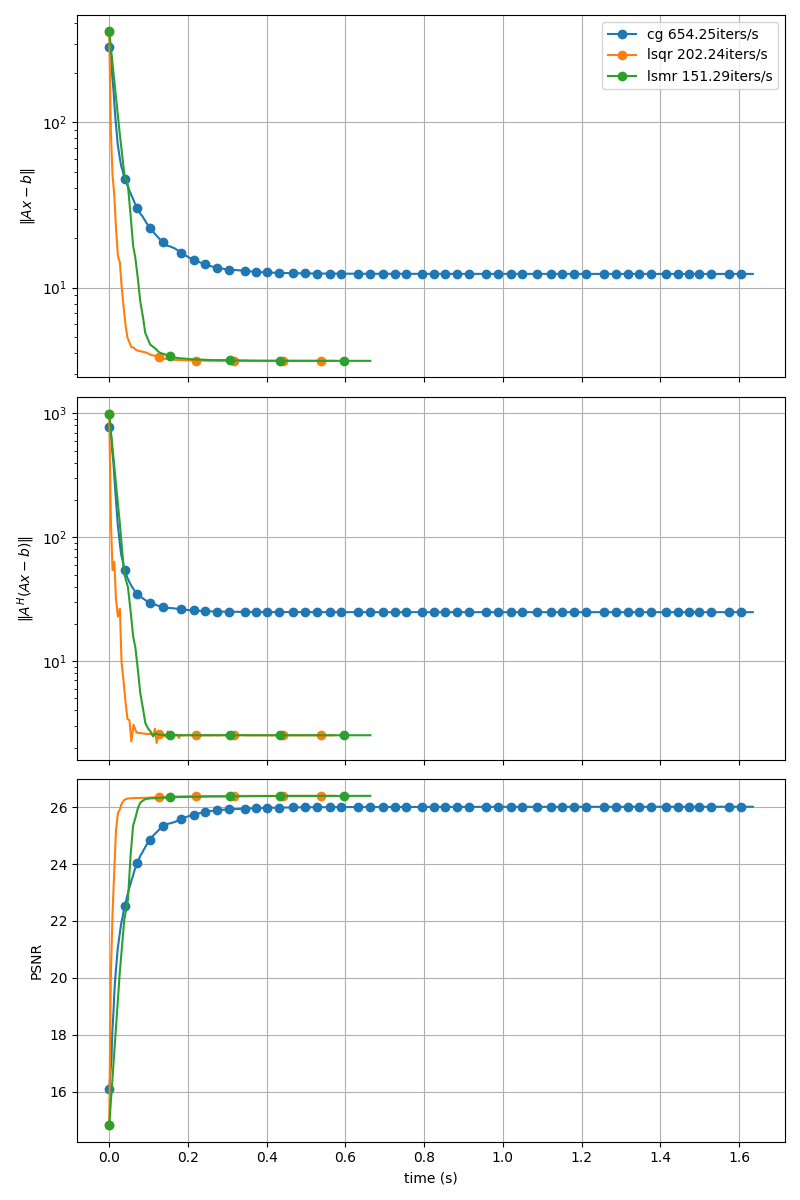

Display Convergence

```python
fig, axs = plt.subplots(len(METRICS), 1, sharex=True, figsize=(8, 12))
for i, metric in enumerate(METRICS):
    for optim in OPTIM:
        if "res" in metric:
            axs[i].set_yscale("log")
        axs[i].plot(
            iterations_cb[optim]["time"],
            iterations_cb[optim][metric],
            marker="o",
            markevery=20,
            label=f"{optim} {np.mean(1/np.diff(iterations_cb[optim]['time'])):.2f}iters/s",
        )
    axs[i].grid()
    axs[i].set_ylabel(METRICS[metric])
axs[0].legend()
axs[-1].set_xlabel("time (s)")
fig.tight_layout()
plt.show()
```

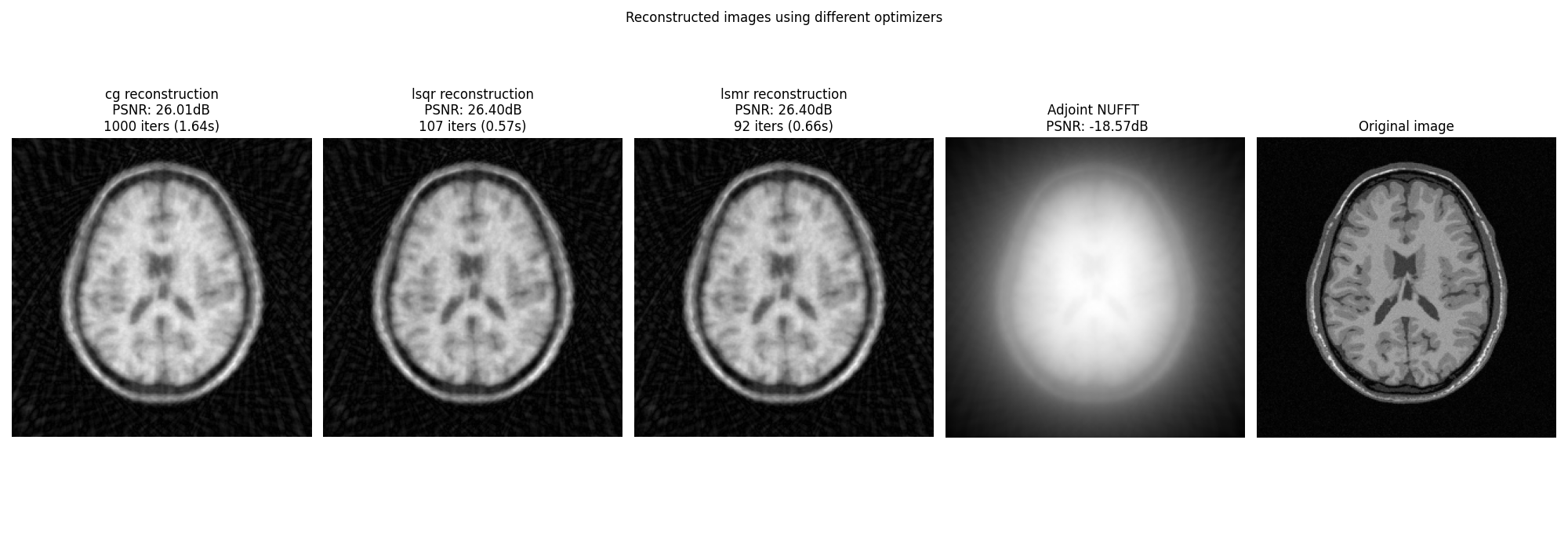

Display images

```python
fig, axs = plt.subplots(1, len(OPTIM) + 2, figsize=(20, 7))
for i, optim in enumerate(OPTIM):
    axs[i].imshow(abs(images[optim]), cmap="gray", origin="lower")
    axs[i].axis("off")
    axs[i].set_title(
        f"{optim} reconstruction\n PSNR: {iterations_cb[optim]['psnr'][-1]:.2f}dB \n"
        f"{len(iterations_cb[optim]['time'])} iters ({iterations_cb[optim]['time'][-1]:.2f}s)"
    )
axs[-1].imshow(abs(ground_truth), cmap="gray", origin="lower")
axs[-1].axis("off")
axs[-1].set_title("Original image")
axs[-2].imshow(
    abs(adjoint),
    cmap="gray",
    origin="lower",
)
axs[-2].axis("off")
axs[-2].set_title(
    f"Adjoint NUFFT \n PSNR: {psnr(abs(adjoint), abs(ground_truth), data_range=ground_truth.max()):.2f}dB"
)
fig.suptitle("Reconstructed images using different optimizers")
fig.tight_layout()
plt.show()
```

Total running time of the script: (0 minutes 29.510 seconds)